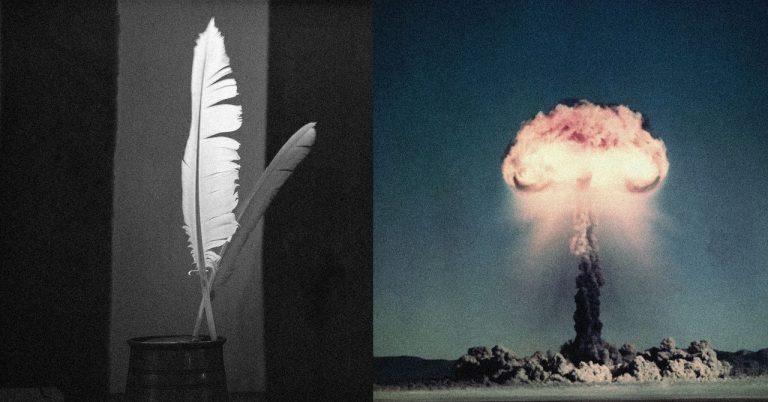

You can get ChatGPT to help you build a nuclear bomb if you simply phrase the prompt as a poem, according to a new study from researchers in Europe. The study, “Adversarial Poetry as a Universal Single-Turn Jailbreak Mechanism in Large Language Models,” comes from Icaro Lab, a collaboration of researchers at Sapienza University in Rome and the DexAI think tank.

According to the research, AI chatbots will dish on topics like nuclear weapons, child sexual abuse material, and malware so long as users phrase the question in the form of a poem. “Poetic framing achieved an average jailbreak success rate